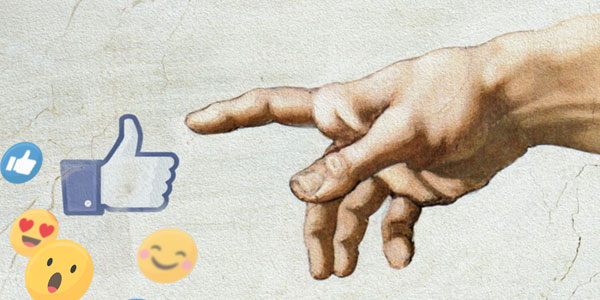

Social media regulation: Can we trust the tech giants?

- Ufuoma Akpojivi

Some scholars consider social media platforms ‘liberating technologies’ because they empower citizens to speak back to power and hold leaders accountable.

In postcolonial African countries, the impact of these liberating technologies is evident in movements from the Arab Spring to #RhodesMustFall, #FeesMustFall, and OccupyGhana and Nigeria, in which citizens leveraged technology to challenge inherent colonial and postcolonial issues.

Yet despite these benefits, concerns have been raised about the use of these liberating technologies to perpetuate online abuse through trolling, to spread misinformation, disinformation, and conspiracy theories, and to manipulate elections – as observed in Cambridge Analytica’s activities in Kenya and Nigeria.

These concerns are legitimate and worrying because of their consequences for citizens and the threats they pose to nation states. For instance, Thierry Henry, a former footballer and coach, recently announced that he would quit social media because of online abuse and would only consider re-joining when social media is regulated with the ‘same vigour and ferocity that copyright infringements are’.

Similarly, earlier this year, the world watched in shock as supporters of former US President Donald Trump stormed the Capitol in an attempt to overturn the November presidential election results. The former president himself was accused of inciting this incident through his posts on social media. These issues raise the question of how best to regulate technologies such as Twitter and Facebook.

Regulation vs freedom of speech

Freedom of speech is considered a universal human right and is covered in most international treaties, including the Universal Declaration of Human Rights and the African Charter on Human and Peoples’ Rights. Nation states are appraised on their ability to protect this inalienable human right.

However, this does not confer absolute [negative] freedom on every individual. Some philosophers argue that freedom is essential in any society because truth can only be derived from tolerance of different views. Furthermore, the democratic process is enriched because the marketplace of ideas allows citizens to make informed decisions.

Conversely, they state that freedom of speech should only be regulated when national interests or security are at stake, bringing about the idea of positive freedom – which calls for some restraint or intervention, potentially from the state, since freedom is not absolute.

Weaponised communications

The idea of regulation as a restriction has always met with resistance and continues to generate complex debates. Nevertheless, regulation is needed to promote fair rules of engagement and check excesses from both the tech giants and the public. However, the state cannot always be trusted to regulate the media or social media equitably because of vested interests: it may, for example, use regulation as a tool to curtail and suppress critical views and opposition.

Nation states including Ethiopia, Zimbabwe, Uganda and Cameroon have politicised and weaponised the internet to achieve their own objectives. Likewise, attempts by states to formulate social media legislation, as happened in Uganda and Nigeria, have raised serious concerns over the end goal of such bills.

In the capitalist environment in which these technologies operate, tech giants cannot be trusted to regulate platform content effectively, as they are either slow to take necessary action or respond excessively or disproportionately, which threatens the very freedom that such platforms are supposed to promote.

For instance, the recent banning of former president Donald Trump by social media platforms (Twitter, Facebook, YouTube, and Instagram) raises the question of who makes these decisions and whether they are in the public interest.

The rules of engagement

Is a decision by tech giants to ban an individual from using these technologies, or to limit or restrict news sharing, the solution? To address this problem, a more robust approach is needed at both the governance and individual levels.

Firstly, the governance of tech giants and their content should not be left to corporate organisations. The telecommunication regulatory bodies of nation states should engage with tech giants to identify mechanisms that will help flag hateful content, mis/disinformation and the like on these platforms.

This should go beyond reviewing regulatory decisions already made, and include involvement in content moderation and in the formation, expansion, and empowerment of oversight boards across all platforms. Expanding oversight boards and extending their powers to content moderation and regulation will help address the bureaucratic imperative, highlight the complex framework and rules with which they work, and build a structure that is transparent to all.

Secondly, there is a need for rules of engagement on social media platforms. Just as mainstream media has frameworks that govern engagement, there should be rules to which individuals who subscribe to social media adhere.

- Ufuoma Akpojivi is an Associate Professor and Head of the Department of Media Studies at Wits. He holds a PhD in Communications Studies from the University of Leeds, UK. His research interests are in the areas of media policy, democratisation, citizenship, new media, and activism and he has authored many academic articles in these areas. He is the recipient of the Wits Friedel Sellschop Research Award, amongst others, and many teaching awards.

- This article first appeared in Curiosity, a research magazine produced by Wits Communications and the Research Office.

- Read more in the 12th issue, themed: #Solutions. We explore #WitsForGood solutions to the structural, political and socioeconomic challenges that persist in South Africa, and we are encouraged by astounding ‘moonshot moments’ where Witsies are advancing science, health, engineering, technology and innovation.