How academics can counter ‘AI thinks, therefore I am’

- Martin Bekker

2023 will be remembered as the year that artificial intelligence (AI) – or, more specifically, large language models (LLMs), like ChatGPT – changed the world.

LLM-assisted writing will indelibly alter many writing tasks, offering speed and efficiency and even automating some of them away entirely. However, academic, scientific and intellectual integrity are at risk, not only because mistakes may creep in but, more importantly, because we may lose the ability to construct well-crafted arguments.

Moreover, in published or submitted work, the ethical principles governing intellectual process and ownership ought to be protected against the diffuse accountability of black-box algorithms.

What has changed?

Language models, which have existed for more than 40 years, are probabilistic models of a human language: trained on a large collection of written or spoken texts, they estimate the likelihood of a given series of words.
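To make "estimating the likelihood of a series of words" concrete, here is a minimal sketch of one of the oldest kinds of language model, a bigram model, which scores a sentence by multiplying the probabilities of each word following the one before it. It is an illustration only: the toy corpus and the function names are hypothetical, and modern LLMs estimate such probabilities with transformer neural networks trained on vastly more text, not with simple word-pair counts.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Toy bigram model: estimate P(next word | previous word) from counts."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        # <s> and </s> are hypothetical markers for sentence start and end.
        words = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, curr in zip(words, words[1:]):
            counts[prev][curr] += 1
    # Convert raw counts into conditional probabilities.
    model = {}
    for prev, followers in counts.items():
        total = sum(followers.values())
        model[prev] = {word: n / total for word, n in followers.items()}
    return model


def sequence_probability(model, sentence):
    """Likelihood of a word sequence as the product of its bigram probabilities."""
    words = ["<s>"] + sentence.lower().split() + ["</s>"]
    prob = 1.0
    for prev, curr in zip(words, words[1:]):
        # Word pairs never seen in training get probability 0 in this sketch.
        prob *= model.get(prev, {}).get(curr, 0.0)
    return prob


corpus = [
    "the model writes text",
    "the model reads text",
    "the scholar writes text",
]
model = train_bigram_model(corpus)
print(sequence_probability(model, "the model writes text"))  # prints 0.3333333333333333
print(sequence_probability(model, "text writes the model"))  # prints 0.0
```

The familiar word order scores 1/3, while the scrambled one scores zero because the toy model never saw those word pairs. Real language models handle unseen combinations far more gracefully; this sketch deliberately does not.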

Over the past decade, both the size of the training collections and the number of weights (the learned numerical parameters) within the models have grown so much that recent models are now called 'large' language models, or LLMs.

ChatGPT, released in November 2022, combined what was then the most advanced LLM with a chatbot interface, simplifying the process of making requests (prompting) and receiving responses.

Contemporary LLMs represent a break with the past in three respects: the speed and scale of their information processing, unprecedented research assistance, and the potential for outsourcing thought. Each of these is briefly unpacked below.

Information processing

LLMs now perform certain tasks within the information economy at a scale and speed that surpass human performance, suggesting a quantum leap in functioning and utility.

Research assistance

The ability of transformer models (the deep-learning architecture behind LLMs) to manipulate language has made LLMs exceptionally good at summarising texts, changing style, and correcting spelling and grammar.

Moreover, several other AI-related tools that assist in the research process have recently been introduced. For instance, research summarising tools (eg: Elicit, Perplexity and Consensus) can 'find' published work unknown to the scholar, 'understand' discourses, identify research gaps and assist with literature reviews.

Other tools can manufacture artificial data. A scholar specifies what a data set should contain and, where real data are unavailable or impossible to gather (for ethical reasons, say), such data can be 'created' instantly.

Outsourcing thinking

Cheating is nothing new, but LLMs raise concern in the academic world to a new level. Writers can now use them to author academic work at every stage, from conceptualisation to research and writing. This imperils the principle of scientific advancement through human reasoning.

Perils notwithstanding, there are obvious benefits to tools that can fix the register, grammar and punctuation of a text in seconds and, apparently, at no cost to the user. This is especially true for those writing in a second language, which applies to most scholars, since they must publish in English. This levelling of the playing field is to be welcomed.

The immediate drawback, though, is that many things we have long battled to counter – dishonesty, cheating and plagiarism – have almost instantly become much harder to detect.

Moreover, LLMs are known to routinely produce credible untruths ('hallucinations', 'simulated authority' or 'compelling misinformation') and to omit attributions to their sources or training data (in effect, plagiarism).

With all of this in mind, the need for practical guidance through the ethical minefield of LLMs is clear.

What has remained constant?

It is comforting that, despite the advent of LLMs, most scientific principles endure.

First, the three values of beneficence, autonomy and justice – all tied to non-maleficence and the avoidance of suffering – stand firm. Right is still right, and wrong remains wrong.

Second, cheating and plagiarism remain anathema to the spirit of science, while openness, reproducibility and the sharing of data still stand as ideals.

An ethical guide to using LLMs

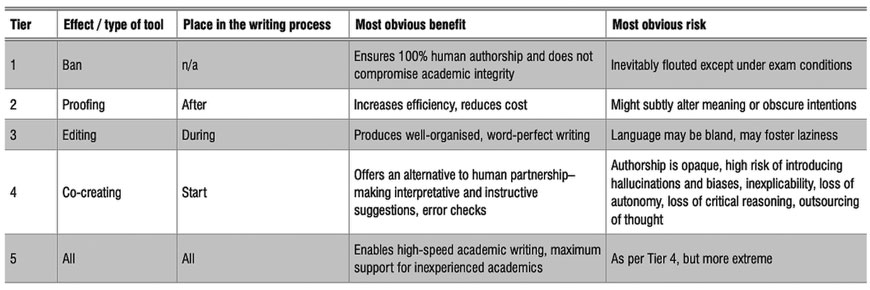

I propose a five-tier system to simplify thinking about what is permitted and prohibited when using LLMs for academic writing. Although the tiers appear to form a continuum of increasing 'levels' of LLM support, they in fact represent paradigmatically different kinds of mental undertaking.

The table below summarises these tiers, where they come into the writing process, and their most obvious benefits and risks. For more detail, see my original journal article.

Summary of large language model (LLM) permission tiers, where they come into the writing process, and their most obvious benefits and risks.

Tier 1 is techno-pessimistic. It assumes that technology, per se, threatens knowledge production and human capabilities. This position is untenable for an academic journal that already relies heavily on LLMs (eg: in the form of spell-checkers), not to mention calculator-like technology.

In contrast, Tiers 2 and 3 may be regarded as technology-embracing. Optimistic about the efficiencies LLMs bring to human knowledge and scientific advancement, these tiers advocate adoption, which can remove the language barriers that so often keep great ideas by non-native English speakers from reaching a global audience, and may even expedite the writing-up process.

Tiers 4 and 5 lean towards AI hype. They assume that LLMs are either on the path to true cognitive supremacy and should thus be employed at all costs, or will soon be so ubiquitous that any resistance to their use is bound to fail.

However, we cannot rule out a Tier 5 future in which AI becomes a colleague and co-author. Two inviolable principles therefore merit further reflection: ownership and transparency.

Ownership

Ownership is the tenet that a submitted or published work remains the responsibility of a human author, who is the only accountable party for mistakes or other consequences.

In our scientific pursuit, it is the individual thinker who toils, weighs and risks. Even where a human and an LLM 'co-create' a work, responsibility for the content must still rest somewhere and, in the spirit of science, it most obviously rests with the human author.

Transparency

Showing one’s work and thought process lies at the heart of scientific accountability, reproducibility, peer accessibility and public trust.

LLMs are by their nature opaque. That does not mean they cannot be used, but rather that we must be open about when and how we use them. The alternative is that readers have to guess whether LLMs were used to produce a text, which would undermine its credibility.

Neither hype nor despair

A pocket calculator's answers are consistent, predictable, replicable and regular. In this sense, LLMs are not like calculators: their massive size and black-box nature appear to have given them, at least by most accounts, 'emergent' capabilities.

Public (and even technical!) discourse has tended to label this 'generative', which, all told, amounts to succumbing to AI hype.

However, the ability to distinguish helpful innovation from unhelpful hyperbole (whether AI saviourism or AI catastrophising) is important if we are to keep humankind's present problems and struggles in perspective, recognise our immediate moral duties, and rationally analyse the extent to which a new class of tools can help or hinder scientific progress and human betterment.

For scientists across every branch of knowledge, mental panic and ossification remain our nemeses. We gain most by seeing in technology neither cataclysmic doom nor total redemption, but instead recalibrating a new technology's value according to what it can change and what it cannot.

Martin Bekker is with the School of Electrical and Information Engineering at the University of the Witwatersrand in South Africa. This article, abridged by Desmond Thompson (a real human), is based on Bekker’s commentary in the South African Journal of Science on 30 January 2024.